
How AI Orchestration Layers are Functioning Like an OS in the Agentic Era

Publication Date

January 31st, 2026

As AI scales, compliance, security, and policy enforcement must be embedded directly into execution.

What Actually Matters.

Embed AI orchestration layers as core components of enterprise architecture for competitive advantage.

Ensure AI systems operate continuously and coordinate workflows across multiple systems.

As enterprises rush to embed AI into their products and workflows, the real competitive edge is no longer about where code is written, which large language model (LLM) is used, or how many agents are deployed. Instead, differentiation increasingly lies in who owns decisions, controls context, and can orchestrate AI reliably within real business systems.

AI orchestration involves coordinating, managing, and automating AI models, data pipelines, and infrastructure.

AI can no longer operate as a collection of tools or isolated agents. Enterprises are now demanding AI systems that run continuously, coordinate workflows across systems, operate under governance, and remain auditable by design.

For most enterprises, AI has reached an inflection point: it must operate continuously, triggering actions, coordinating workflows, and interacting with enterprise systems and the humans in the loop.

“On the ground, AI is no longer viewed as a loose collection of models, agents, or tools. Instead, enterprises, particularly at a serious production scale, expect AI to function as a system. In turn, there is a growing imperative to embed AI as a core component, or foundational fabric, of enterprise architecture rather than treating it as an add-on capability,” Sandeep Khuperkar, CEO and founder of AI innovation company Data Science Wizards (DSW), tells AIM.

Enterprises now have to confront a problem that has been lying dormant: the absence of a system lens for AI. While AI orchestration frameworks like LangGraph, AutoGen, and CrewAI provide powerful abstractions, deploying them at enterprise scale introduces significant hurdles.

For instance, in a multi-step chain, if an agent fails at step seven, standard error logs often point to a generic framework internal rather than the specific prompt or tool call that failed.
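
One common way to narrow this gap is to have the chain runner attach the failing step's own context to the error. The following is a minimal sketch in plain Python, not tied to any of the frameworks named above; `Step`, `StepError`, and `run_chain` are hypothetical names introduced here for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, List


class StepError(Exception):
    """Raised when a chain step fails; carries that step's own context."""

    def __init__(self, index: int, name: str, payload: Any, cause: Exception):
        super().__init__(f"step {index} ({name}) failed: {cause!r}")
        self.index = index      # 1-based position of the failing step
        self.name = name        # human-readable step name
        self.payload = payload  # input the failing step received
        self.cause = cause      # original exception raised by the step


@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]


def run_chain(steps: List[Step], payload: Any) -> Any:
    """Run steps in order, passing each output to the next step."""
    for index, step in enumerate(steps, start=1):
        try:
            payload = step.run(payload)
        except Exception as exc:
            # The assignment above did not happen, so `payload` is still
            # the input that was handed to the failing step.
            raise StepError(index, step.name, payload, exc) from exc
    return payload
```

With a wrapper like this, a failure at step seven surfaces the step's name and input alongside the original exception, instead of only a stack trace through framework internals.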

A developer mentioned in a Reddit post that he used LangChain for orchestration, GPT-4 for reasoning, and custom tools built on FastAPI. “Observability is where it falls apart—LangSmith gives you traces, but when you’ve got 15 tool calls spread across a 10-minute run, correlating which decision corrupted downstream context is a nightmare,” he explains.

Aravind Jayendran, a data scientist and the CEO and founder of deep tech startup LatentForce, says the major pain point is flexibility. While LangChain makes it easy to set up a prototype, he says the customisations a production system needs, such as extra steps and tools for retrieval, context management, and reranking, end up slowing him down.

“This is quite hard to do with frameworks like LangChain, and with the advent of coding agents, it’s quite easy and better for us to build any agent harness we need ourselves,” Jayendran states.

Homegrown AI Orchestration

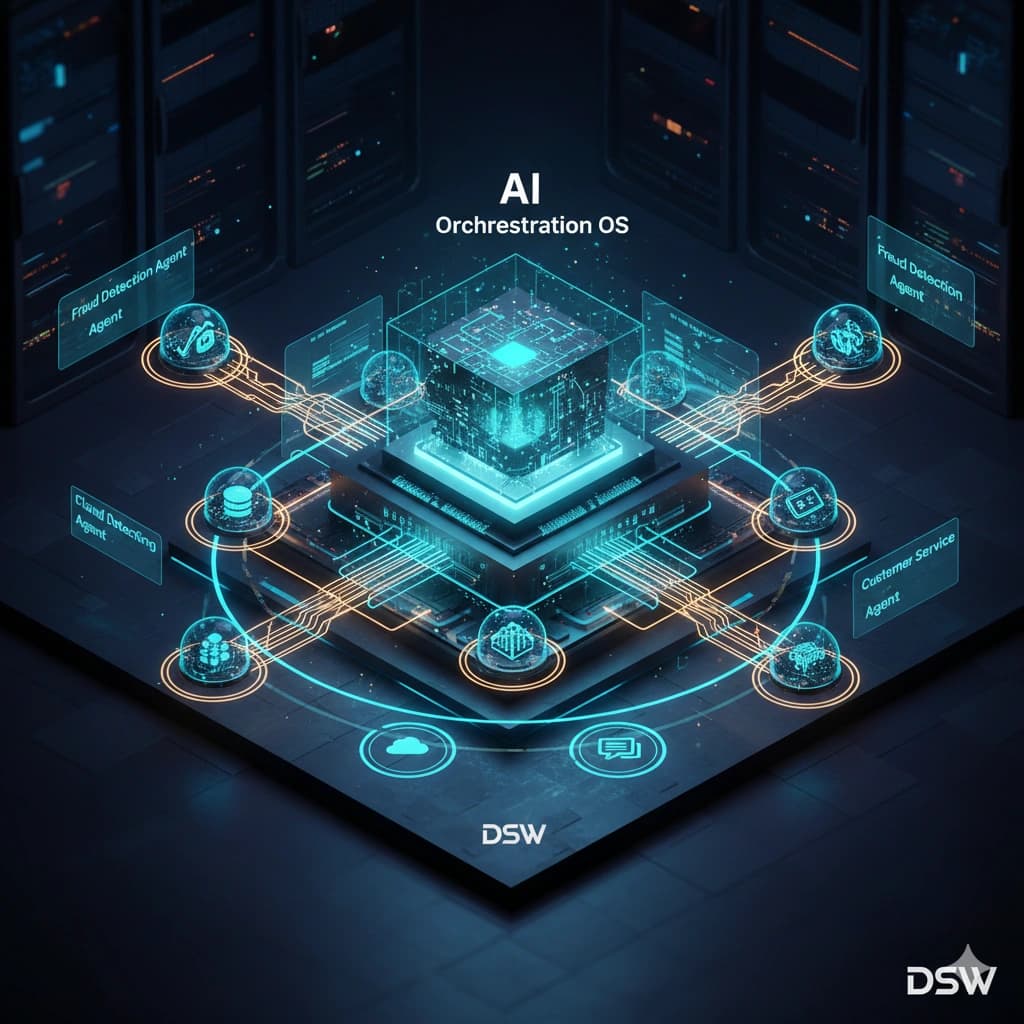

Soon, DSW will launch its enterprise AI operating system built in India.

The DSW enterprise AI operating system is a unified architecture in which UnifyAI (AI/ML runtime) and agentic AI (agent lifecycle management and orchestration) function as governed subsystems. Governance is embedded directly into the kernel, enabling policy enforcement, auditability, and reversibility by design rather than as afterthoughts.

The operating system is designed for flexible deployment across on-premises, private cloud, or hybrid environments, aligning with enterprise infrastructure requirements.

Crucially, enterprises retain full custody and control over their models, agents, workflows, and intellectual property, avoiding vendor lock-in. At the same time, the broader AI ecosystem can integrate seamlessly into the platform, while remaining firmly under enterprise governance and control.

Breaking the use-case trap

Another barrier slowing enterprise AI adoption is the economics of experimentation. Many organisations hesitate to test new use cases because each requires heavy budget justification.

“One of the biggest barriers is fear, not of technology, but of justifying budgets for each use case,” Khuperkar adds.

This has led to a new operating model where enterprises are moving away from per-use-case pricing toward subscription-based AI systems. “We want to break the luxury of AI. One subscription, unlimited use cases.”

Equally important is ownership. Enterprises are increasingly rejecting vendor lock-in, particularly when AI becomes mission-critical.

“Everything built on our operating system—the agents, models, analysis, feature engineering—remains in the customer’s custody,” he says.

The analogy is clear: removing the AI operating system doesn’t take away ownership, but it does dismantle the structure that keeps everything coherent.

Technology shift patterns

History offers a clear pattern. Compute required operating systems like Unix, Linux, and Windows. Networks demanded standardised protocols. Data centres needed orchestration layers to function at scale.

AI is now following the same trajectory.

What began as individual tools and models quickly evolved into frameworks and then platforms. But platforms alone are no longer sufficient.

“Enterprises are no longer treating AI as just a collection of models, agents, or tools. Serious enterprises want to operate AI as a system,” he explains.

That expectation fundamentally changes how AI is built, governed, and deployed. AI must now be embedded as a core fabric of enterprise architecture, not bolted on as an application. This realisation has driven the emergence of AI orchestration layers that function much like an operating system, governing how AI runs, scales, and interacts across the enterprise.

“When we say operating system, people immediately think of Linux or Windows. We’re not replacing them. We’re building an operating system on top of them—one that governs AI,” Khuperkar mentions.

The shift from platform to operating system is driven by real enterprise pressures. As AI scales, governance can no longer sit outside runtime. Compliance, security, and policy enforcement must be embedded directly into execution.

“Governance has to move into runtime—compliance as code, security as code, at the kernel level,” Khuperkar adds.
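
To make "compliance as code" concrete, here is a minimal Python sketch of a policy check that runs before every governed action. It is an illustration only and does not describe DSW's implementation; `governed`, `PolicyViolation`, `pii_requires_consent`, and `score_lead` are all hypothetical names, and the policy rule itself is an assumed example.

```python
from functools import wraps
from typing import Any, Callable, Dict

# A policy is a function that inspects the request context and allows or denies it.
Policy = Callable[[Dict[str, Any]], bool]


class PolicyViolation(Exception):
    """Raised when a policy refuses an action before it runs."""


def governed(policies: Dict[str, Policy]):
    """Decorator: evaluate every policy at call time, before the action executes."""
    def decorator(action):
        @wraps(action)
        def wrapper(context: Dict[str, Any], *args, **kwargs):
            for name, allows in policies.items():
                if not allows(context):
                    raise PolicyViolation(
                        f"{action.__name__} blocked by policy {name!r}"
                    )
            return action(context, *args, **kwargs)
        return wrapper
    return decorator


# Assumed example rule: actions touching personal data need recorded consent.
def pii_requires_consent(context: Dict[str, Any]) -> bool:
    return not context.get("uses_pii", False) or context.get("consent", False)


@governed({"pii_requires_consent": pii_requires_consent})
def score_lead(context: Dict[str, Any], lead_id: str) -> str:
    return f"scored {lead_id}"
```

Because the check sits in the call path rather than in a separate review process, an action that violates a policy never executes, which is the property the runtime-governance argument depends on.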

This is especially critical as agentic workflows begin crossing organisational and functional boundaries. Claims interact with underwriting, underwriting with forecasting, forecasting with propensity models, often across teams and systems.

“Multiple teams need to share the same control plane. And regulators need auditability by design, not by documentation,” he says.

One of the most striking realities emerging from enterprise deployments is that model choice is no longer the hard problem. LLMs are increasingly commoditised. The real challenge lies in orchestrating them reliably inside business processes.

Highly regulated environments such as banking and insurance cannot simply plug experimental models into production systems. AI decisions must be traceable, explainable, and continuously auditable.

“For every AI action, enterprises need to know: who initiated it, which agent acted, under what policy, on what data, and at what time,” Khuperkar remarks.

This level of accountability cannot be administered after deployment. It must be native to the orchestration layer itself.
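
The five questions in that quote map naturally onto a structured audit record written at the moment an action runs. The sketch below is one possible shape, assuming an in-memory list as the log; `AuditRecord`, `record_action`, and the field names are hypothetical and chosen only to mirror the quote.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditRecord:
    initiated_by: str  # who initiated the action
    agent: str         # which agent acted
    policy: str        # policy the action ran under
    data_ref: str      # reference to the data it acted on
    timestamp: str     # when it happened (ISO 8601, UTC)


def record_action(
    log: List[str],
    initiated_by: str,
    agent: str,
    policy: str,
    data_ref: str,
    now: Optional[datetime] = None,
) -> AuditRecord:
    """Append one JSON audit line to `log` and return the record."""
    moment = now or datetime.now(timezone.utc)
    record = AuditRecord(
        initiated_by=initiated_by,
        agent=agent,
        policy=policy,
        data_ref=data_ref,
        timestamp=moment.isoformat(),
    )
    # One JSON object per entry keeps the log easy to search and replay.
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record
```

In a real deployment the log would be an append-only store rather than a Python list, but the point is the same: the record is produced by the orchestration layer as part of execution, not reconstructed afterwards.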

From Pilots to Production – and real ROI

Despite widespread experimentation, most enterprises still do not run AI in serious production.

“Even today, 80–85% of companies are not running AI in production,” he reveals.

The reason is simple: pilots are being built around tools, not business outcomes. Enterprises test models without anchoring them to business purpose statements. One insurance CIO told Khuperkar: “I want to increase policy closure rates by 20% through my call centre.”

That goal cannot be solved with a single model or agent. It requires orchestrating sentiment analysis, propensity scoring, lead prioritisation, and workflow integration, end-to-end, in real-time.

New Role of Orchestration Layers

What is emerging is a new category of enterprise infrastructure—AI orchestration layers that act as the connective tissue between innovation and execution.

“We are bringing AI as a system to the industry,” he claims.

Differentiation will no longer come from having more models, more agents, or more demos. It will come from how well AI is orchestrated, governed, and embedded into real business workflows.

The winners will be the enterprises, and GCCs, that stop thinking about AI as software and start operating it as infrastructure.
